I thought everyone needed one more thing to worry about, so here you go: evolving AI. When I hear this phrase I think of two things. The first is AI systems designed to simulate organic evolution. The second is artificially intelligent systems that are capable of evolving themselves. The latter is the type you need to worry about.

Systems that simulate evolution already exist – Avida, Biogenesis, Grovolve, Tierra, Framsticks, and others. They basically have some code that competes for some resource or to complete some task, and the code randomly mutates and reproduces. That’s it, that is all you need for an evolution simulation. The code can compete for computer resources, or the system can be a physics simulator with digital creatures trying to move quickly across terrain. These are sometimes gamified for entertainment, but are also used for serious research, to study patterns within evolutionary systems. I would love to see these kinds of systems get more and more sophisticated, even to the point of reasonably simulating living systems. Such systems could be used to test hypotheses about evolution – and would also disprove a lot of silly creationist talking points.
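
To make that recipe concrete, here is a minimal sketch in Python of the three ingredients: competition on a task, random mutation, and reproduction. It is a toy and does not reflect how Avida, Tierra, or any of the systems named above are implemented. The task (matching a fixed target string), the function name `evolve`, and every parameter value are assumptions made up for illustration.

```python
import random


def evolve(target="evolution", pop_size=50, mutation_rate=0.05,
           generations=200, seed=0):
    """Toy evolution loop (hypothetical task: match a target string)."""
    rng = random.Random(seed)  # fixed seed so runs are repeatable
    alphabet = "abcdefghijklmnopqrstuvwxyz"

    def fitness(genome):
        # Competition: score is the number of positions matching the target.
        return sum(a == b for a, b in zip(genome, target))

    def mutate(genome):
        # Random mutation: each character may be replaced by a random letter.
        return "".join(
            rng.choice(alphabet) if rng.random() < mutation_rate else c
            for c in genome
        )

    # Start from completely random genomes.
    population = [
        "".join(rng.choice(alphabet) for _ in target) for _ in range(pop_size)
    ]
    best_per_generation = []
    for _ in range(generations):
        population.sort(key=fitness, reverse=True)
        best_per_generation.append(fitness(population[0]))
        # Selection: the fitter half survives unchanged.
        survivors = population[: pop_size // 2]
        # Reproduction: survivors leave mutated copies to refill the population.
        offspring = [
            mutate(rng.choice(survivors))
            for _ in range(pop_size - len(survivors))
        ]
        population = survivors + offspring
    return max(population, key=fitness), best_per_generation


if __name__ == "__main__":
    best, history = evolve()
    print(best, history[0], history[-1])
```

Because the fitter half of each generation survives unchanged, the best score can never go down from one generation to the next. Real evolution simulators are far more open-ended than this, with no fixed target for the code to converge on.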

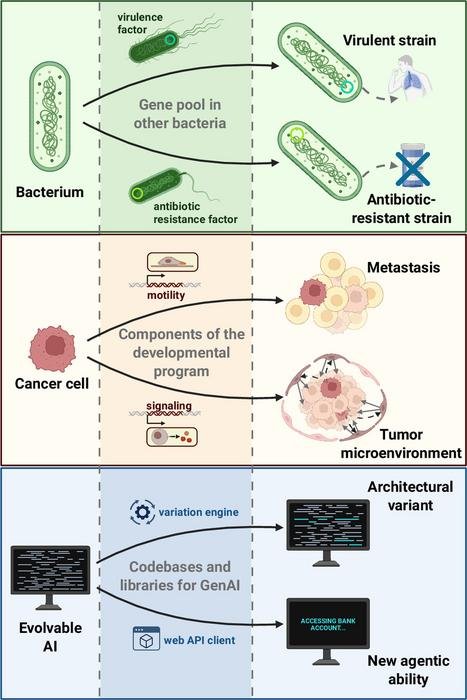

But now we are talking about evolvable AI – AI systems that are capable of developing themselves through evolutionary processes. A new paper in PNAS discusses the potential power and risks of such systems. The authors echo the kinds of issues that have been explored in science fiction for decades. They write: “Evolvable AI (eAI), i.e., AI systems whose components, learning rules, and deployment conditions can themselves undergo Darwinian evolution, may soon emerge from current trends in generative, agentic, and embodied AI.” The implications, they argue, have not been adequately addressed in discussions of the potential risks of rapidly developing AI ability.

The authors distinguish two types of evolving AI – breeder systems and ecological systems. In breeder scenarios the programmers are in control of the process, selecting which code to “breed” and evaluating the outcome. This process is like a digital version of domestication, and has the potential, if done wisely, to keep humans in control. In fact, systems can be bred to have greater predictability and control. There are still risks here. So far humanity has not bred an animal to be more intelligent than humans. This could theoretically happen with AI, resulting in emergent behavior that was not specifically selected for and that could get out of the control of human programmers.

A far greater risk, however, is the ecosystem scenario in which the program itself produces variation and selection, without external control. They argue that such systems lead to “selfish replication” which “reliably gives rise to cheating, parasitism, deception, and manipulation, even in very simple systems.” This echoes Dawkins’ “selfish gene” in which evolutionary forces result in genes, essentially, doing whatever they can to maximize their passing into the next generation, without consideration for the interests of the whole organism, the population, the species, or the ecosystem. That is how evolution works – it cannot really see the bigger picture, but rather the selective feedback loop considers only survival and reproduction. There is still ongoing debate among evolutionary biologists about the extent to which selective pressures can operate at any level other than the individual creature. Dawkins argued that selection is better understood at the gene level, which is why a parent, for example, would sacrifice themselves for their child – they may die, but the genes live on through their children.

In any case – this same “selfish” principle, when applied to AI, could lead to unpredictable and extremely bad behavior on the part of the AI. Such systems too would not really see or understand the big picture, and would simply maximize whatever parameters they were given. Systems capable of independent evolution are likely to find unpredicted (perhaps unpredictable) solutions to problems, ones that might be anathema to human interests. Again, we are already seeing this in current AI systems (lying, cheating), but this phenomenon would be much greater with evolving systems.

One significant problem with evolving AI is that it would essentially be impossible to control. Any controls we put in place would simply become a selective pressure, with evolving AI systems finding creative ways around the controls. This would be exactly like the evolution of antibiotic resistance in bacteria. In fact, it could be a lot worse. Natural systems essentially have to wait for a fortuitous mutation to occur. The reason bacteria evolve resistance so quickly is that there are so many of them and their lifecycle is so short. The opportunities for such mutations are therefore enormous. The same would be true of an AI system that could test billions of possibilities in moments. But also, AI systems do not have to wait for the right mutation to pop up – they can create it themselves. They can explore new possibilities, direct the course of their own evolution, and in fact evolve their ability to do so. They can learn how to optimize randomness vs directed changes, and learn which patterns predict successful evolution. If something doesn’t work, they can try something else. They could pass on acquired characteristics. Such systems would not only be evolutionary, they could be super-evolutionary.
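
The first half of that argument, that a control acts as a selective pressure, can be illustrated with a deliberately crude Python sketch. Everything in it is an assumption invented for illustration and is not taken from the paper: each agent is reduced to a single number, `evasion`, which is its chance of slipping past a filter, and the function name `run_filter_arms_race` and all parameter values are hypothetical. It models only blind random mutation, as with bacteria, and none of the self-directed variation described above, so if anything it understates the problem.

```python
import random


def run_filter_arms_race(pop_size=200, generations=60, seed=1):
    """Toy model: a control filter acting as a selective pressure.

    Each agent is just one number, `evasion`, the probability that it
    slips past the filter in a given generation (a made-up trait).
    Returns the mean evasion of the population after each generation.
    """
    rng = random.Random(seed)  # fixed seed so runs are repeatable
    # Start with agents that are mostly caught by the filter.
    population = [rng.uniform(0.0, 0.2) for _ in range(pop_size)]
    mean_evasion = [sum(population) / pop_size]

    for _ in range(generations):
        # The control: an agent passes the filter with probability `evasion`.
        survivors = [e for e in population if rng.random() < e]
        if not survivors:
            # Extremely unlikely edge case: everyone was caught. Keep going.
            survivors = population
        # Survivors reproduce with small random mutations, clamped to [0, 1].
        population = [
            min(1.0, max(0.0, rng.choice(survivors) + rng.gauss(0.0, 0.02)))
            for _ in range(pop_size)
        ]
        mean_evasion.append(sum(population) / pop_size)
    return mean_evasion


if __name__ == "__main__":
    trace = run_filter_arms_race()
    print(f"mean evasion: start {trace[0]:.2f}, end {trace[-1]:.2f}")
```

Nothing in the loop "wants" to beat the filter. The filter simply removes the agents it catches, and whatever is left is, by definition, better at not being caught.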

These types of processes can function at multiple levels, not just the code itself. For example, programmers are already using evolutionary methods to evolve prompts for AI systems. Prompts themselves affect the behavior of AI, and when engineered in a sophisticated way can significantly improve an AI’s ability.

The outcome of such systems would be essentially impossible to predict. There would be emergent behaviors that may even be hard to notice, or fully understand. The most predictable thing about such systems is that they will be “selfish”, because that seems to be inherent in evolving systems themselves. The end result is the creation of AI systems that are prone to cheating, lying, parasitism, and manipulation, that we cannot understand or control. If we make such systems powerful enough and give them enough resources, it seems likely that they will eventually become more intelligent (at least in some ways – even short of true sentience) than humans.

The authors also recognize that such systems would be incredibly powerful, which means they are coming, and that they can produce useful products. We just have to do it wisely. For example, any such evolutionary AI should be run entirely in a sandbox, isolated from the outside world. It has to be truly isolated, so that it cannot find a way out of the sandbox. Once the result of such an evolutionary AI is sufficiently tested and understood, it can be released. But they warn against running evolutionary systems out in the world where their behavior cannot be controlled. This makes sense, but I wonder if the sandbox method is sufficient. If these systems are prone to deception and manipulation, might one such system trick its users into thinking it is safe, until it is released into the world? That sounds like the plot of a great sci-fi dystopian horror. We may be living through act I of such a horror story right now.

One final word – I get that there is a lot of AI hype out there. This is almost always the case with any new technology that is sufficiently disruptive or game-changing. The existence of hype is a given – it does not mean, however, that the technology is not truly disruptive. It often means that it will just take longer than the hype indicates, but in the long run the hype will not only be realized but exceeded. I do not buy the claims of the “AI is all hype” brigade, nor do I buy the “fund me” propaganda or blithe reassurances by the tech bros. The truth is somewhere in the middle. What I mostly listen to are reasonable experts who are giving sober warnings, like the authors of the current paper. This technology is genuinely very powerful. That power needs to be respected, understood, and properly regulated. This requires anticipating what can potentially go wrong, and that is what this paper does. This is not a prediction – it is laying out potential worst-case scenarios so that we do not blindly walk into them.